Computers & Internet

Renew the certificate at RapidSSL (or look around for a new vendor)

In the end, all that is needed is to copy the following into /etc/ssl/localcerts:

a) the private key file (.key)

b) a certificate file, created by pasting the regular certificate followed by the intermediate certificate into a single file
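Instead of cutting and pasting, the combined file can be assembled with `cat`. This is a minimal sketch with a hypothetical helper name (`build_chain`) and hypothetical file names. The one subtlety it handles is a server certificate file that lacks a trailing newline, which would otherwise glue `-----END CERTIFICATE-----` onto the following `-----BEGIN CERTIFICATE-----` line.

```shell
#!/bin/bash
# Build a chained certificate: server certificate first, then the intermediate.
# Usage: build_chain SERVER_CRT INTERMEDIATE_CRT OUTPUT  (hypothetical helper)
build_chain() {
  local server=$1 intermediate=$2 out=$3
  {
    cat "$server"
    # Insert a newline only if the server file does not already end with one.
    if [ -n "$(tail -c 1 "$server")" ]; then
      echo
    fi
    cat "$intermediate"
  } > "$out"
}
```

For example, `build_chain example.com.crt intermediate.crt /etc/ssl/localcerts/example.com.chained.crt` (all three names are placeholders). The order matters: the server certificate must come first.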

Then, run the checks below to make sure everything is working correctly.

Then restart nginx:

sudo /etc/init.d/nginx restart

Note: I had some weird permission issues, so it is easiest to just edit the existing files rather than try to create new ones.

Todo next time: Investigate whether it is worth the effort to generate a CSR (certificate signing request) on our server. Also, consider using Let’s Encrypt.

Checking that the Private Key Matches the Certificate

The private key contains a series of numbers. Two of those numbers form the “public key”, the others are part of your “private key”. The “public key” bits are also embedded in your Certificate (we get them from your CSR). To check that the public key in your cert matches the public portion of your private key, you need to view the cert and the key and compare the numbers. To view the Certificate and the key run the commands:

```shell
$ openssl x509 -noout -text -in server.crt
$ openssl rsa -noout -text -in server.key
```

The ‘modulus’ and the ‘public exponent’ portions in the key and the Certificate must match. But since the public exponent is usually 65537 and comparing long moduli is tedious, you can use the following approach:

```shell
$ openssl x509 -noout -modulus -in server.crt | openssl md5
$ openssl rsa -noout -modulus -in server.key | openssl md5
```

Then compare these much shorter hashes. If the keys are different, the hashes will differ with overwhelming probability. As a one-liner:

$ openssl x509 -noout -modulus -in server.crt | openssl md5 ; openssl rsa -noout -modulus -in server.key | openssl md5

And with auto-magic comparison (if more than one hash is displayed, they don’t match):

$ (openssl x509 -noout -modulus -in server.crt | openssl md5 ; openssl rsa -noout -modulus -in server.key | openssl md5) | uniq
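The same check can be wrapped in a small function that succeeds only when the two digests match, which is handy in a renewal script. This is a sketch under a few assumptions. The helper names `modulus_digest` and `cert_matches_key` are hypothetical. It assumes RSA keys, since `-modulus` is RSA-specific. It uses `openssl sha256` in place of `openssl md5`; any digest works, because it only shortens the modulus for comparison.

```shell
#!/bin/bash
# Print a digest of the RSA modulus found in a certificate, key or CSR.
# $1: openssl subcommand (x509, rsa or req); $2: input file.
modulus_digest() {
  local mod
  mod=$(openssl "$1" -noout -modulus -in "$2" 2>/dev/null) || return 1
  printf '%s\n' "$mod" | openssl sha256
}

# Exit status 0 only if the certificate and the private key share a modulus;
# 2 if either file could not be read.
cert_matches_key() {
  local cert_hash key_hash
  cert_hash=$(modulus_digest x509 "$1") || return 2
  key_hash=$(modulus_digest rsa "$2") || return 2
  [ "$cert_hash" = "$key_hash" ]
}
```

Usage: `cert_matches_key server.crt server.key && echo match`. Because `openssl x509` reads only the first certificate in a file, this also works on the combined certificate file described at the top of this post, where the server certificate comes first.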

BTW, to check which key or certificate a particular CSR belongs to, you can compute

$ openssl req -noout -modulus -in server.csr | openssl md5
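When several old keys are lying around, the CSR's digest can be compared against each of them in a loop. This is a sketch with the hypothetical name `keys_for_csr`, again assuming RSA keys. It prints every candidate key whose modulus matches the CSR.

```shell
#!/bin/bash
# Print a digest of the RSA modulus found in a certificate, key or CSR.
modulus_digest() {
  local mod
  mod=$(openssl "$1" -noout -modulus -in "$2" 2>/dev/null) || return 1
  printf '%s\n' "$mod" | openssl sha256
}

# Usage: keys_for_csr CSR KEY...  (hypothetical helper)
keys_for_csr() {
  local csr=$1 csr_hash key
  shift
  csr_hash=$(modulus_digest req "$csr") || return 1
  for key in "$@"; do
    # Unreadable keys yield an empty digest and therefore never match.
    if [ "$(modulus_digest rsa "$key")" = "$csr_hash" ]; then
      printf '%s\n' "$key"
    fi
  done
}
```

For example, `keys_for_csr server.csr /etc/ssl/localcerts/*.key`.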

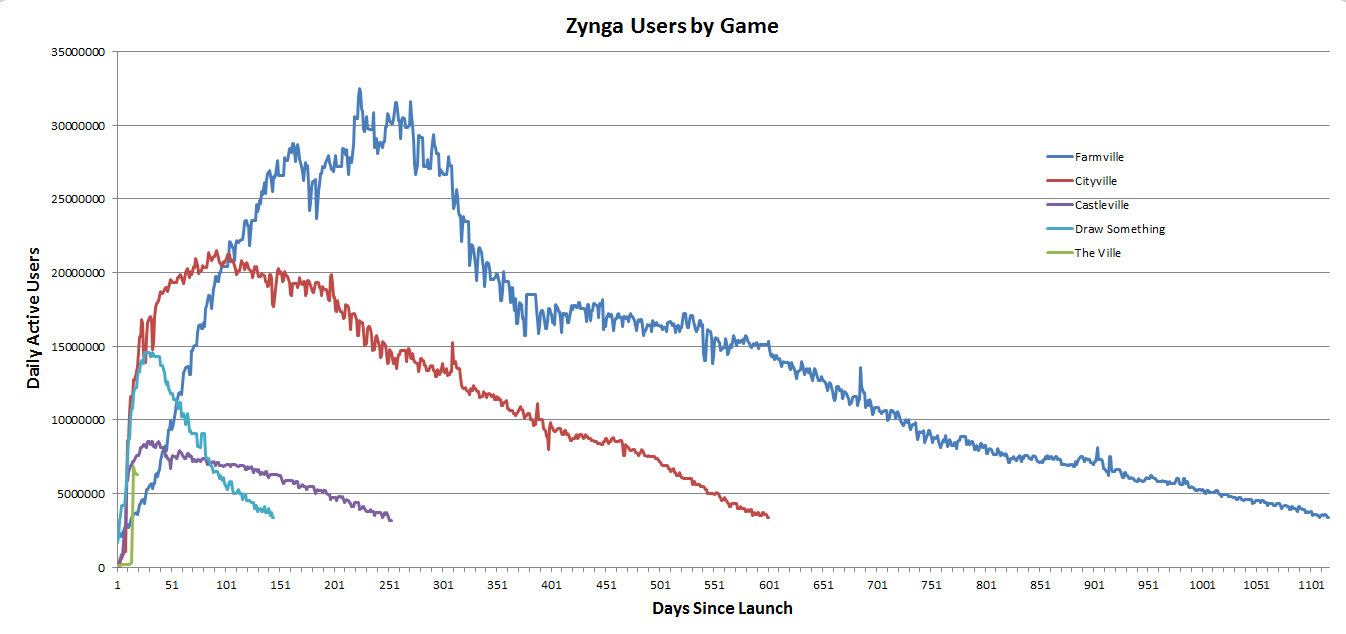

Each successive Zynga game peaks earlier but with fewer users. The Farmville -> Cityville -> Castleville progression must have been alarming. And then they bought ‘Draw Something’ right at its peak. It’s going to be tough to keep filling the bucket.

What’s difficult to see on the graph is that Zynga’s Sims rip-off, The Ville, appears to have already peaked at around 6.3M daily active uniques.

Spent some time tuning my MySQL database for a small website (~2K users per day). MySQL Tuner was recommending that we increase the size of the query cache above 16M but we were dubious. The relevant metrics according to this article are:

- Hit rate    = Qcache_hits / (Qcache_hits + Com_select)

- Insert rate = Qcache_inserts / (Qcache_hits + Com_select)

- Prune rate = Qcache_lowmem_prunes / Qcache_inserts
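The three ratios are easy to compute by hand, but a small helper makes it simple to recheck them as the counters grow. This is a sketch with the hypothetical name `qcache_rates`. It takes the four counters as arguments, which can be read with `SHOW GLOBAL STATUS LIKE 'Qcache%'` and `SHOW GLOBAL STATUS LIKE 'Com_select'`.

```shell
#!/bin/bash
# Usage: qcache_rates QCACHE_HITS COM_SELECT QCACHE_INSERTS QCACHE_LOWMEM_PRUNES
qcache_rates() {
  awk -v hits="$1" -v sel="$2" -v ins="$3" -v prunes="$4" 'BEGIN {
    # Queries answered from the cache increment Qcache_hits rather than
    # Com_select, so the sum approximates the total number of SELECTs.
    lookups = hits + sel
    printf "hit_rate=%.1f%%\n",    100 * hits / lookups
    printf "insert_rate=%.1f%%\n", 100 * ins / lookups
    printf "prune_rate=%.1f%%\n",  100 * prunes / ins
  }'
}
```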

In our case we had gathered the following stats over a 48 hour period:

| Status variable         | Value    |
|-------------------------|----------|
| Com_select              | 1163740  |
| Qcache_hits             | 531650   |
| Qcache_inserts          | 1021165  |
| Qcache_lowmem_prunes    | 82507    |
| Qcache_not_cached       | 142575   |
| Qcache_queries_in_cache | 2145     |
| Qcache_total_blocks     | 5643     |
| Qcache_free_blocks      | 1175     |
| Qcache_free_memory      | 11042672 |

So for our database:

- Hit rate    = 31%

- Insert rate = 60%

- Prune rate  = 8%

We’re not too sure what to make of this. A hit rate of 31% doesn’t seem too bad, but our insert rate is also quite high. For now, we’re leaving the query cache as is, especially since the comments in the post mentioned above suggest that making it larger than 20M is futile.

We have written previously about the outsourcing of the web stack. In this post, we will add more color on why the outsourcing of the entire web platform makes sense. While developers have gravitated en masse to offerings like Heroku, there is still a wider lack of appreciation for why PaaS is a major trend.

In this post, we are going to set aside the wider question of the economics of running your application on a PaaS versus hosting and maintaining your own servers. Our aim is to describe what constitutes a PaaS and how it differs from IaaS (such as Amazon Web Services) and other SaaS offerings like Salesforce.com.

The Four Pillars of a PaaS

- No installation required. Whether your application is written in Ruby on Rails, Python, Java or any other language du jour, there should be no need to install an execution environment when deploying your application to a PaaS. Your code should run on the platform’s built-in execution engine. While minor constraints are necessary, our view is that the successful PaaS providers will largely conform to the language specifications as they are in the wild. This ensures portability of your application between platforms and other hosted environments.

- Automated deployment. A single click or command line instruction is all that stands between the developer and a live application.

- Elimination of middleware configuration. Tweaking settings in Apache or Nginx, managing the memory on your MySQL instance, and installing three flavors of monitoring software are now things of the past.

- Automated provisioning of virtual machines. Application scaling should happen behind the scenes. At 3am. Without breaking a sweat.

There are a few other characteristics of the new breed of PaaS services which we would regard as optional components of a platform but which greatly enhance its utility. By integrating other components into the web stack and constraining these to a few well-curated and proven bundles, a PaaS offering can consolidate services into a single bill and, perhaps more importantly from a developer’s point of view, ensure interoperability and maintain a best-of-breed library. Heroku has done a great job of facilitating easy deployment of application add-ons such as log file management, error tracking, and performance monitoring.

There is often confusion as to the difference between PaaS and SaaS: a PaaS offering is an outsourced application stack sold to developers. A SaaS offering is a business application typically sold to business users.

The difference between PaaS and IaaS is more subtle and over time the dividing line is likely to blur. Today, the PaaS platforms begin where the IaaS services leave off: IaaS effects the outsourcing of the hardware components of the web stack. PaaS platforms effect the outsourcing of the middleware components of the web stack. It is the abstraction of the repetitive middleware configuration that has caught the imagination of developers. PaaS saves time and expedites deployments.

It is a great time to be a web software developer. Over the last decade, the components of web development which offer little strategic advantage to a startup have gradually been eliminated and outsourced, to such an extent that today the gap between writing code and deploying a new application is often bridged with a single click.

Whereas ten years ago deploying a new application required provisioning a new server, installing Linux, setting up MySQL, configuring Apache, and finally uploading the code, the process today has dramatically less friction. On Heroku, a single command is now all that stands between a team of developers and a live application:

> git push heroku master

Let’s take a closer look at what is happening. The code residing in the repository is uploaded directly to, in this example, Heroku’s cloud platform. From that point onward, the long list of tasks involved in maintaining and fine-tuning a modern web stack is outsourced. The platform provider handles hard drive failures, exploding power supplies, denial-of-service attacks, router replacement, server OS upgrades, security patches, web server configuration … and everything in between.

The implications of this trend are bound to be far-reaching. As common infrastructure is outsourced to vendors such as Amazon, Rackspace, Google and Salesforce.com, the base of customers for hardware and stack software will become increasingly concentrated. As the platform vendors function both as curators and distributors of middleware for associated services such as application monitoring and error logging, new monetization opportunities will arise for those companies, such as New Relic, providing these tools.

Just as the arrival of open-source blogging platforms eliminated the intervening steps between writers and audiences, so the new breed of platforms has reduced the friction between developers and their customers.

Most importantly, though, the barriers for new private companies to compete have been permanently lowered. Today, $100 per month can buy you a billion-dollar data center.

As unstructured file data increasingly resides in cloud file systems, there is a large component that is still missing: Drag & Drop.

Currently, it is not possible to drag a file from Box.net to Salesforce.com or any other cloud service, without first downloading the file to my desktop and then re-uploading it. This problem is compounded on mobile devices such as the iPad because there is no easily accessible local storage or ‘Desktop’ equivalent.

Solving this problem will be more of an engineering challenge than meets the eye. First, every cloud service has implemented its own storage protocol and folder system. Second, there is the even larger problem of authentication. Hopefully it will soon be possible to easily tile two browser windows and drag from one cloud service to another. Until then, we will keep on downloading and re-uploading.

Postscript: I have concluded that a single online repository for all my files is a pipe-dream. As the Microsoft monopoly is broken apart, there is going to be increasing fragmentation of cloud services.

I used Ubuntu 10.04 so that I know I don’t need to upgrade for the next four years.

1. Follow Linode’s excellent ‘Getting Started’ instructions.

2. Add a new user and add them to the sudoers file.

3. Use Josh’s ‘Railsready’ script to install Ruby etc.

Rather than using RVM to create gemsets, I prefer to ‘Vendor Everything’, so I didn’t use RVM to install Ruby.

4. Install Passenger (this will also install nginx)

In this way you can obtain the list of the ten oldest processes:

ps -elf | sort -r -k12 | head -n 10
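A caveat on the command above: in `ps -elf` output, field 12 is STIME, which shows a time of day for processes started today and a date for older ones. Sorting that column as text therefore only approximates age. This sketch instead sorts numerically on elapsed time. It assumes the procps-ng `ps` found on most Linux distributions, which provides the `etimes` column (seconds since the process started). The helper name `oldest_processes` is hypothetical.

```shell
#!/bin/bash
# List the N longest-running processes (default 10), oldest first.
# The trailing "=" on each column suppresses the header line.
oldest_processes() {
  local n=${1:-10}
  ps -eo etimes=,pid=,args= | sort -rn | head -n "$n"
}
```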

To sort processes by memory usage, press Shift+M while top is running.

Press ‘c’ to show the full path of each command.

For other useful ‘top’ configurations.

If you’re getting 502 Bad Gateway errors from your WordPress site when using Nginx, then the following cron job might help:

Add a .sh script with this content and set it to trigger every ten minutes:

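```shell
#!/bin/bash
# Count running php5.cgi processes attached to a .php.sock socket.
count_php() {
  ps aux | grep '.php.sock' | grep 'php5.cgi' | wc -l
}

PHPCOUNT=$(count_php)
echo "$PHPCOUNT"

# Restart PHP via the nginx init script's "startphp" action until a process appears.
while [ "$PHPCOUNT" -eq 0 ]
do
  /etc/init.d/nginx startphp
  sleep 5   # avoid a tight loop if the restart keeps failing
  PHPCOUNT=$(count_php)
done
```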

The script counts the php5.cgi processes and, if none are running, restarts PHP.

The script is /root/nginx_php.sh

The cron job is installed in root’s crontab, with this content:

```
ps19952:/var/spool/cron/crontabs# crontab -l
MAILTO=""
*/10 * * * * /bin/sh /root/nginx_php.sh
```

Stacy Smith, Intel’s CFO, has some interesting data on the tipping point for PC market penetration. As the cost of a PC in a region moves from multiple years to 8 weeks of income, the penetration changes from zero to about 15%. Once the cost drops below 8 weeks of income, the penetration rises very rapidly to 50%.

According to Smith, the cost of a PC in both India and China is now below 8 weeks of income in those countries.